In this brief article, we’ll try to identify, on a high level, the major architectural components of a DataMiner System and make a number of recommendations to allow such a system to scale horizontally whilst offering maximum flexibility and data resilience.

DataMiner Agent (DMA)

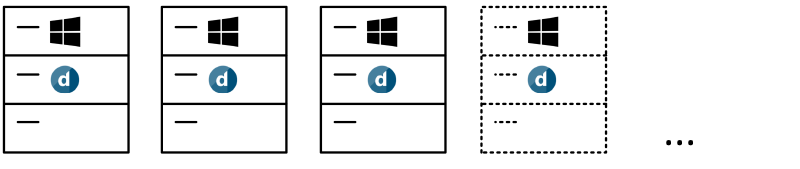

A DMA, or DataMiner Agent, is a single instance of a fully functioning DataMiner System. Depending on its installed license, it will offer a certain set of functionalities and capabilities. The main function of a DataMiner Agent is to communicate with any type of data source out there and process this data to allow operations on a higher level (alarm monitoring, data storage, service and resource management, etc.). On a high level, you can consider a DMA to be a fully functional processing node, and in case we would like to scale our processing capacity, we can simply do so by combining more of these processing nodes into a cluster, as such creating a DataMiner System consisting of a number of DataMiner Agents.

Each DMA instance has to be hosted on a server instance complying with the minimum system requirements and running a recent Windows Server OS version. This server instance can be hosted either on barebone hardware or on a virtualized/cloud-based infrastructure.

DataMiner Cassandra node

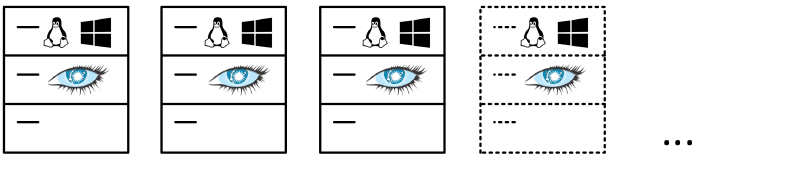

A DataMiner Cassandra node is a single instance of a Cassandra node, which can operate as either a single-node Cassandra cluster or a multi-node Cassandra cluster. Each DataMiner Agent requires its own local Cassandra cluster to serve as its local database. In case of a pair of DMAs in a Failover setup (1 + 1) or in situations where a single DMA requires a very large local database, the local Cassandra cluster will consist of more than one node. We can scale the capacity of a local database by simply adding more Cassandra nodes to the Cassandra cluster serving as local database. As the number of DataMiner Agents increase, the number of Cassandra clusters will increase along with it.

The local Cassandra database will serve as a repository for configuration, historical parameter and alarm data.

Data resilience is maintained at Cassandra-node level as all data written to the Cassandra cluster will, by default, have a replication factor of 2. This ensures that a copy of the data will always be present on another node of the local Cassandra cluster. In order to leverage this functionality, you do require to set up a multi-node Cassandra cluster as local database. A single-node Cassandra cluster will not offer this data resilience.

Each Cassandra node instance has to be hosted on a server instance complying with the minimum system requirements and running a recent Windows Server or Linux Server OS version supporting Cassandra. This server instance can be hosted either on barebone hardware or on a virtualized/cloud-based infrastructure.

NOTE:

- All nodes within a Cassandra cluster need to run the same server OS and preferably have the same system specifications.

- By the end of 2020, we will start supporting an additional local database architecture where all DMAs in a DataMiner System can share a single local database (i.e. a Cassandra cluster).

- DataMiner will also support integration with Cassandra as a Service as soon as this feature is officially released by the public cloud providers (AWS, Azure, etc.).

- Currently, DataMiner supports Cassandra versions 3.7 and 3.11.

DataMiner Elasticsearch node

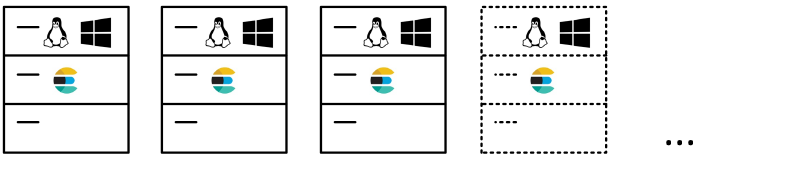

A DataMiner Elasticsearch node is a single instance of an Elasticsearch node that can operate as either a single-node Elasticsearch cluster or a multi-node Elasticsearch cluster. Having your DataMiner System connected to an Elasticsearch cluster is currently still an optional feature, but it is mandatory if you want to have access to specific DataMiner functionality such as Jobs, DataMiner SRM, enhanced alarm searching, etc. All DMAs in a DataMiner System are expected to connect to the same Elasticsearch cluster. We can scale the capacity of an Elasticsearch cluster by simply adding more Elasticsearch nodes to the Elasticsearch cluster. Scaling the DataMiner System can be done independently from scaling the Elasticsearch cluster.

Currently, an Elasticsearch store hosts configuration, logger table and alarm data, but this might be further expanded in the future.

Elasticsearch manages data resilience in a similar way as Cassandra, i.e. by replicating the data to more than one node in the cluster. By making the data available on more than one node, you will always survive the loss of a single node in the cluster without suffering any data loss. However, a single-node Elasticsearch system will not offer this data resilience. You will need at least a three-node cluster in order to obtain both data resilience and an increase in overall Elasticsearch capacity.

Each Elasticsearch node instance has to be hosted on a server instance complying with the minimum system requirements and running a recent Windows Server or Linux Server OS version supporting Elasticsearch. This server instance can be hosted either on barebone hardware or on a virtualized/cloud-based infrastructure.

An Elasticsearch architecture (master, coordinator, data nodes) can be tailored to the exact requirements of the system implementation. By default, there will be a standard configuration made available.

NOTE:

- All data nodes within an Elasticsearch cluster need to run the same server OS and preferably have the same system specifications.

- DataMiner will also support integration with Elasticsearch as a Service when provided by the public cloud providers (AWS, Azure, etc.).

- Currently, DataMiner supports Elasticsearch version 6.8.

Conclusion

As you have probably noticed, lots of architectural solutions can already be developed once you decide which components will be hosted where. Each of those solutions will have benefits as well as downsides, and it will always be a case of tailoring the solution to your own requirements taking all aspects into consideration.

However, there is one general best practice rule which helps you avoid most of the pitfalls.

For instance, yes you can opt to host a DMA, a Cassandra node and an Elasticsearch node on one server instance hosted on either barebone hardware or in a virtualized environment. However, this does mean that a lot of the hardware resources will need to be shared between those three components and that you might run into situations where one component will prevent the other components from using mandatory CPU, memory or disk resources as you are scaling up your solution.

In order to completely avoid this from happening, the best practice here would be to always separate these components and run them on their own server instance. This will offer you the maximum flexibility and agility when scaling on component level (DMS, Cassandra, Elasticsearch). It will prevent you from each time having to scale multiple components at the same time and any of the components from impacting each other on a system resource level at runtime.

Hopefully, this short article will help you in your architectural decisions.

Great overview with good insights that are important to consider when you set up a DataMiner System.

great information.