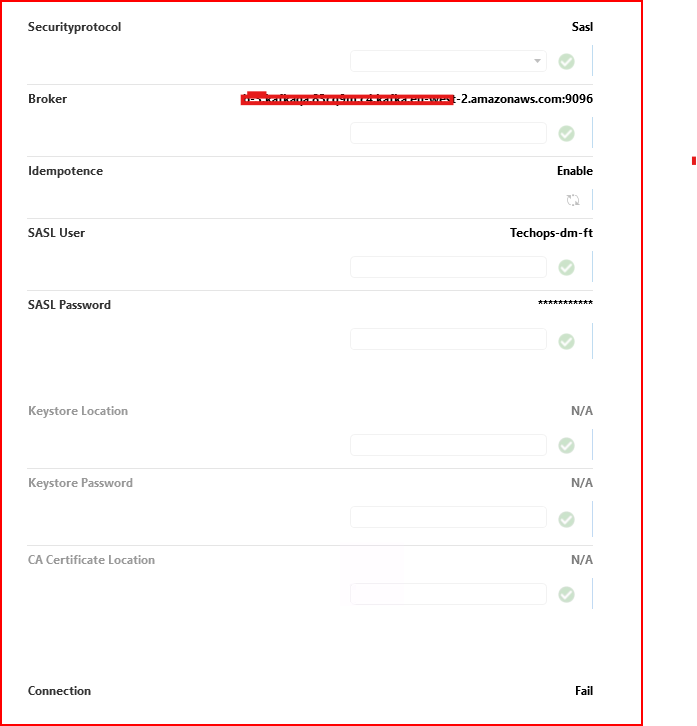

We’re having trouble getting the Generic Kafka Producer to start. Our Generic Kafka Consumer works fine using the same broker address and same SASL/PLAIN credentials, but the Producer fails during startup with:

<code>Confluent.Kafka.KafkaException: Local: Broker transport failure</code> (occurs during <code>AdminClient.GetMetadata()</code>)

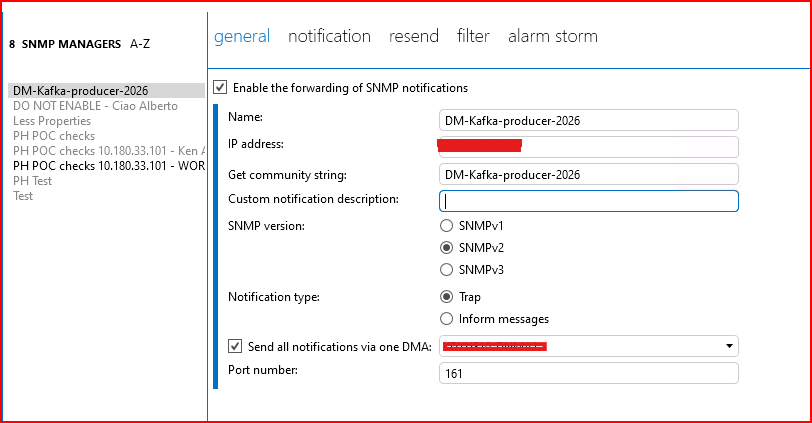

In Cube, we also configured an SNMP Manager (Apps → System Center → SNMP Forwarding) and selected that forwarder when creating the Producer element — but the Kafka connection still fails. We’re unclear what that SNMP Manager setting is actually used for in the context of the Kafka Producer.

Hi

It could be related to a firewall or DNS issue between DataMiner and the Kafka broker(s). Please ensure that the broker listener on port 9096 (listeners/advertised.listeners, including the protocol mapping) matches the DataMiner client settings. Also, please confirm that the broker provider/network allows connections from the DataMiner server's public IP(s)/network to the Kafka brokers on 9096, and that the broker advertises endpoints that are reachable from DataMiner (not only the bootstrap address).
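To separate a DNS problem from a blocked port, a quick check like the one below can be run from the DataMiner server. This is only a minimal sketch using the Python standard library; the `probe` helper and the host names in the usage comment are hypothetical and not part of DataMiner or the connector. An "ok" result only means the TCP port accepts connections; it does not test TLS or SASL.

```python
import socket


def probe(host: str, port: int, timeout: float = 5.0) -> str:
    """Return 'dns_failure', 'tcp_failure', or 'ok' for one broker endpoint."""
    try:
        # Resolve the name the same way any client on this machine would.
        socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    except socket.gaierror:
        return "dns_failure"
    try:
        # A completed TCP handshake shows the port is open and not firewalled.
        with socket.create_connection((host, port), timeout=timeout):
            return "ok"
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return "tcp_failure"


# Example usage (hypothetical host names, replace with your own):
# for host in ["broker-1.example.com", "broker-2.example.com"]:
#     print(host, probe(host, 9096))
```

Run it against the bootstrap address and against every broker address the cluster advertises, since the client needs to reach all of them.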

As for the SNMP Manager: the standard SNMP Forwarding functionality is used to filter the alarms. At least one SNMP Manager must be created on each DataMiner Agent.

In the SNMP Manager, you can:

- Define which alarm information should be forwarded (using the custom binding OIDs)

- Define which alarms should be forwarded (via filtering rules)

The SNMP Manager then sends the selected alarm information as SNMP Inform messages over the loopback interface to the Kafka element.

Regards

The consumer working with the same bootstrap server and SASL/PLAIN credentials confirms that the initial connection and authentication path works for at least one broker, but it does not rule out a later broker reachability issue. Kafka uses bootstrap.servers to establish the initial connection and discover the cluster, and clients then connect to the broker addresses returned in the metadata, which come from advertised.listeners. Since the Kafka producer explicitly calls AdminClient.GetMetadata() at startup, it can fail there if one or more of those broker addresses is not reachable or resolvable from the connector host.

The main thing to verify is that the advertised.listeners are reachable from the DataMiner server and match the listener/security configuration used by the producer.
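If you can get the broker's `advertised.listeners` and `listener.security.protocol.map` values from whoever manages the cluster, a small script can show which advertised endpoints a client with a given security protocol should end up using. This is a rough sketch only: the helper names and sample values are hypothetical, and treating an unmapped listener name as its own protocol is a simplification that mirrors Kafka's default map.

```python
def parse_advertised_listeners(value: str) -> list[tuple[str, str, int]]:
    """Split 'NAME://host:port,...' into (listener_name, host, port) tuples."""
    endpoints = []
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue
        name, _, address = item.partition("://")
        # rpartition keeps bracketed IPv6 hosts such as [::1] intact.
        host, _, port = address.rpartition(":")
        endpoints.append((name, host, int(port)))
    return endpoints


def parse_protocol_map(value: str) -> dict[str, str]:
    """Split 'NAME:PROTOCOL,...' into a {listener_name: protocol} mapping."""
    mapping = {}
    for item in value.split(","):
        item = item.strip()
        if item:
            name, _, protocol = item.partition(":")
            mapping[name] = protocol
    return mapping


def endpoints_for_protocol(advertised: str, protocol_map: str,
                           client_protocol: str) -> list[tuple[str, int]]:
    """Return advertised (host, port) pairs whose listener uses client_protocol."""
    mapping = parse_protocol_map(protocol_map)
    result = []
    for name, host, port in parse_advertised_listeners(advertised):
        # Simplification: an unmapped listener name is treated as the protocol.
        if mapping.get(name, name) == client_protocol:
            result.append((host, port))
    return result


# Hypothetical sample values, not taken from any real cluster.
advertised = "INTERNAL://kafka-1.internal:9092,EXTERNAL://broker-1.example.com:9096"
protocol_map = "INTERNAL:PLAINTEXT,EXTERNAL:SASL_SSL"
matching = endpoints_for_protocol(advertised, protocol_map, "SASL_SSL")
```

With these sample values, `matching` contains only the `EXTERNAL` endpoint on port 9096. If the list comes back empty for the protocol configured on the producer, or it contains host names that the DataMiner server cannot resolve, that points to a listener mismatch rather than a credentials problem.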

As a side note, the Kafka Producer works together with the SNMP forwarding module, acting as a trap receiver that formats DataMiner alarm traps into JSON and pushes them to a Kafka topic. This means that messages are forwarded to Kafka only after a successful connection.

Regards

Thank you, Tiago, for your response. We have checked port 9096, and the consumer protocol is able to connect to the broker on it. However, we are not able to connect to the same broker with the producer protocol.

We configured an SNMP forwarder over the loopback interface on the same DMA that hosts the Kafka element, using the default OID bindings. Are there any other steps we could take to stop the connection from failing? Thank you in advance.