Partners

System Integrators

The role of system integrators has changed in recent years, following major technology transitions such as the migration to all-IP interconnectivity, network function virtualization (NFV) and cloudification. Much more than before, your media and broadband customers expect best-of-breed network designs that bring agility, flexibility and security into their operations.

SIs are no longer simply designing systems; they are challenged to design systems composed of many more functions, originating from a vast number of suppliers. Those functions are numerous, complex and fast-changing.

Let’s take an example: think about what used to be a ‘complex video compression encoder’, or a ‘big Cable Modem Termination System (CMTS)’. Such a product was considered complex and advanced. However, it came with a detailed manual and an exhaustive datasheet listing the exact specifications, supported standards and performance limitations. Furthermore, you could order that box from a single supplier, whom you could also call upon for support, troubleshooting and software updates.

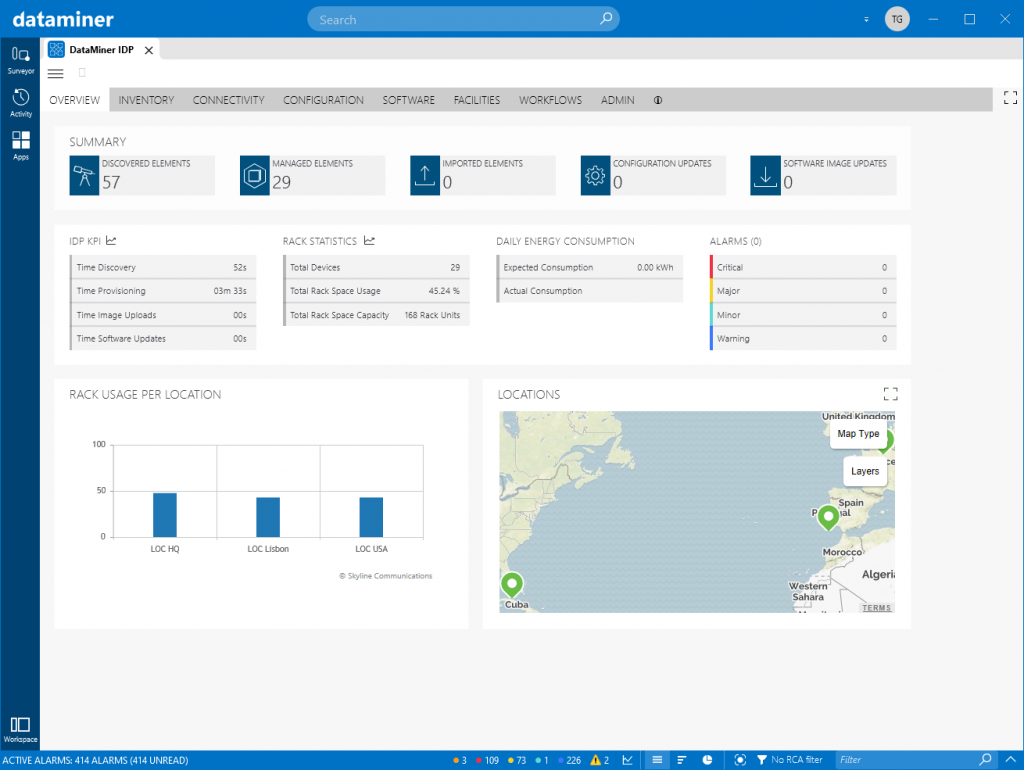

Let’s now look at the new situation. Rather than receiving a box, you receive URLs to various ‘microservices’ you can download. That is still easy. But then… you need to go and look for an OS and a container environment to deploy those containers on. Do you go with open source? Probably not, so you have to turn to another supplier for your container environment. And for the OS. And the same goes for the hardware required for compute, storage and networking.

The question is: how much of all that do you need for the project? The most common answer you get from your suppliers is: ‘depends on the performance of the other supplier’. The second most common answer is: ‘depends on the quality your end customer expects’. Soon, you’ll miss the days when you had a single manual, a single spec sheet and a single telephone number to call.

This is just an illustration of how the systems integration business is evolving. It also illustrates the strategic importance of DataMiner in multi-vendor installations. Instead of making you swivel-chair, DataMiner brings the source of truth onto a single screen, across all the technologies in the system. With DataMiner, you have a single place to manage the various software images from different vendors and to automate full system updates.

And with that come many other use cases: correlating events and performance across the entire stack, including PNI, VNI and cloud, and orchestrating services end to end while keeping resource planning and reservations on all layers of the deployment. Those are just a few examples that show the strategic importance of deploying DataMiner in a multi-vendor operation, and the importance of having such a powerful platform in your customers’ operations during the system deployment, configuration, dry run, field test and production phases.